If we want to start with a topic before getting into deep learning, the perceptron is a good place to start, as it is the basic unit with which artificial neural networks (ANNs) are built. We can then use multiple perceptrons in parallel to form a dense layer. By using multiple dense layers, we can build a deep neural network (DNN).

The objective of simple linear regression is to predict the target data based on the input data

where is the true function, but it is unknown and fixed, and is a intrinsic noise independent of .

Purpose of this Notebook:

Create a dataset for simple linear regression task

Create our own Perceptron class from scratch

Calculate the gradient descent from scratch

Train our Perceptron

Compare our Perceptron to the one prebuilt by PyTorch

Setup¶

print('Start package installation...')Start package installation...

%%capture

%pip install torch

%pip install scikit-learnprint('Packages installed successfully!')Packages installed successfully!

import torch

from torch import nn

from platform import python_version

python_version(), torch.__version__('3.12.12', '2.9.0+cu128')device = 'cpu'

if torch.cuda.is_available():

device = 'cuda'

device'cpu'torch.set_default_dtype(torch.float64)def add_to_class(Class):

"""Register functions as methods in created class."""

def wrapper(obj): setattr(Class, obj.__name__, obj)

return wrapperDataset¶

create dataset¶

We assume that predicts , and is independent and identical distributed (idd assumption). Independent means that two samples do not statistically depende on each other, and identical distributed means that all is distributed from the same unknown distribution.

The input data can be represented as a vector

and the target data can be also represented as a vector

from sklearn.datasets import make_regression

import random

M: int = 10_100 # number of samples

X, Y = make_regression(

n_samples=M,

n_features=1,

n_targets=1,

bias=random.random(), # random true bias

noise=1

)

X = X.squeeze() # remove the axis of length 1

print(X.shape)

print(Y.shape)(10100,)

(10100,)

split dataset¶

We are going to split the dataset into two sets, the training dataset , validation dataset and test dataset .

Train dataset is used to fit model parameters

Validation dataset us used to adjust hyperparameters, select models or evaluate training

Test dataset is utilized for the purpose of evaluating our pre-trained models

Remark: , and are disjoint, .

Let’s refer as training input and target data respectively, as validation input and target data respectively, and as test input and target data respectively.

X_train = torch.tensor(X[:100], device=device)

Y_train = torch.tensor(Y[:100], device=device)

X_train.shape, Y_train.shape(torch.Size([100]), torch.Size([100]))X_valid = torch.tensor(X[100:], device=device)

Y_valid = torch.tensor(Y[100:], device=device)

X_valid.shape, Y_valid.shape(torch.Size([10000]), torch.Size([10000]))⭐️ We are going to leave out the test dataset for now.

delete raw dataset¶

del X

del YScratch simple perceptron¶

weight and bias¶

Our model has two trainable parameters , which are called bias and weight respectively.

class SimpleLinearRegression:

def __init__(self) -> None:

self.b = torch.randn(1, device=device)

self.w = torch.randn(1, device=device)

def copy_params(self, torch_layer: nn.modules.linear.Linear) -> None:

"""

Copy the parameters from a module.linear to this model.

Args:

torch_layer: Pytorch module from which to copy the parameters.

"""

self.b.copy_(torch_layer.bias.detach().clone())

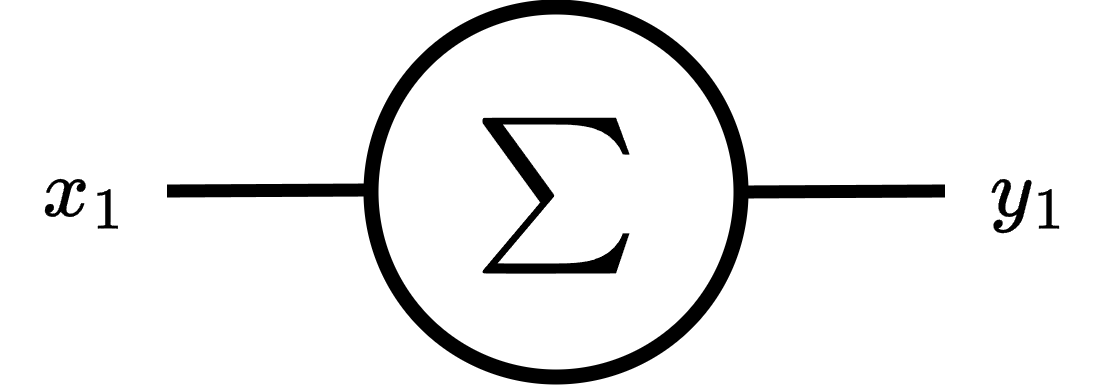

self.w.copy_(torch_layer.weight[0,:].detach().clone())weighted sum¶

We selected the weighted sum for as a linear approximation of the true function

where is some input (not be necessary the training).

Note: we remark with bold because given a vector , is a vector too.

Remark: We can add a scalar to a vector by broadcasting mechanism.

@add_to_class(SimpleLinearRegression)

def predict(self, x: torch.Tensor) -> torch.Tensor:

"""

Predict the output for input x.

Args:

x: Input tensor of shape (n_samples,).

Returns:

y_pred: Predicted output tensor of shape (n_samples,).

"""

return self.b + self.w * xMSE¶

We need a loss function to help guide the adjustment of our parameters during training. We will use Mean Squared Error (MSE) as loss function

MSE is defined as

or using a vectorized form

where is the Euclidean norm or is also called norm (L2 norm).

@add_to_class(SimpleLinearRegression)

def mse_loss(self, y_true: torch.Tensor, y_pred: torch.Tensor):

"""

MSE loss function between target y_true and y_pred.

Args:

y_true: Target tensor of shape (n_samples,).

y_pred: Predicted tensor of shape (n_samples,).

Returns:

loss: MSE loss between predictions and true values.

"""

return ((y_pred - y_true)**2).mean().item()

@add_to_class(SimpleLinearRegression)

def evaluate(self, x: torch.Tensor, y_true: torch.Tensor):

"""

Evaluate the model on input x and target y_true using MSE.

Args:

x: Input tensor of shape (n_samples,).

y_true: Target tensor of shape (n_samples,).

Returns:

loss: MSE loss between predictions and true values.

"""

y_pred = self.predict(x)

return self.mse_loss(y_true, y_pred)gradients¶

To make adjustments to our model, it is necessary to compute derivatives.

First, determine the derivatives to be computed

Then, ascertain the size of each derivative

Finally, compute the derivatives

⭐️ We are using Einstein notation, that implies summation. For example

we will use Einstein notation for chain rule summation, for example

Using chain rule, we can determine the derivatives we need. Gradient of MSE respect to bias is

Gradient of MSE respect to weight is

Okay, we have determined the derivatives we need. Now let’s calculate their shapes

All right, now we can compute each derivative.

MSE derivative¶

The vectorized form is

weighted sum derivative¶

respect to bias¶

respect to weight¶

Then, the vectorized form is

full chain rule¶

Derivative of MSE respect to bias is

Derivative of MSE respect to weight is

Note: We are using dot product as inner product .

Remark: Einstein notation implies summation

final gradients¶

parameters update¶

Now, let’s update the trainable parameters using gradient descent (GD) as follows

where is called learning rate.

@add_to_class(SimpleLinearRegression)

def update(self, x: torch.Tensor, y_true: torch.Tensor,

y_pred: torch.Tensor, lr: float):

"""

Update the model parameters.

Args:

x: Input tensor of shape (n_samples,).

y_true: Target tensor of shape (n_samples,).

y_pred: Predicted output tensor of shape (n_samples,).

lr: Learning rate.

"""

delta = 2 * (y_pred - y_true) / len(y_true)

self.b -= lr * delta.sum()

self.w -= lr * torch.dot(delta, x)gradient descent¶

We will use mini-batch gradient descent (mini-batch GD) to adjust the parameters of our model

where:

is the number of epochs

is an arbitrary model’s parameter, in our case are and

is the number of samples per minibatch

and are the -th to -th train samples

Note: are called hyperparameters, because they are adjusted by the developer rather than the model.

To learn more about types of gradient descents, please see gradient descents.

@add_to_class(SimpleLinearRegression)

def fit(self, x: torch.Tensor, y: torch.Tensor,

epochs: int, lr: float, batch_size: int,

x_valid: torch.Tensor, y_valid: torch.Tensor):

"""

Fit the model using gradient descent.

Args:

x: Input tensor of shape (n_samples,).

y: Target tensor of shape (n_samples,).

epochs: Number of epochs to fit.

lr: learning rate.

batch_size: Int number of batch.

x_valid: Input tensor of shape (n_valid_samples,).

y_valid: Target tensor of shape (n_valid_samples,).

"""

for epoch in range(epochs):

loss = []

for batch in range(0, len(y), batch_size):

end_batch = batch + batch_size

y_pred = self.predict(x[batch:end_batch])

loss.append(self.mse_loss(

y[batch:end_batch],

y_pred

))

self.update(

x[batch:end_batch],

y[batch:end_batch],

y_pred,

lr

)

loss = round(sum(loss) / len(loss), 4)

loss_v = round(self.evaluate(x_valid, y_valid), 4)

print(f'epoch: {epoch} - MSE: {loss} - MSE_v: {loss_v}')Scrath vs Torch.nn¶

We will be implementing a model created with PyTorch’s pre-built classes for linear regression. This will allow us to compare our model from scratch with the PyTorch model.

Torch.nn model¶

class TorchLinearRegression(nn.Module):

def __init__(self, n_features):

super(TorchLinearRegression, self).__init__()

self.layer = nn.Linear(n_features, 1, device=device)

self.loss = nn.MSELoss()

def forward(self, x):

return self.layer(x)

def evaluate(self, x, y):

self.eval()

with torch.no_grad():

y_pred = self.forward(x)

return self.loss(y_pred, y).item()

def fit(self, x, y, epochs, lr, batch_size, x_valid, y_valid):

optimizer = torch.optim.SGD(self.parameters(), lr=lr)

for epoch in range(epochs):

loss_t = [] # train loss

for batch in range(0, len(y), batch_size):

end_batch = batch + batch_size

y_pred = self.forward(x[batch:end_batch])

loss = self.loss(y_pred, y[batch:end_batch])

loss_t.append(loss.item())

optimizer.zero_grad()

loss.backward()

optimizer.step()

loss_t = round(sum(loss_t) / len(loss_t), 4)

loss_v = round(self.evaluate(x_valid, y_valid), 4)

print(f'epoch: {epoch} - MSE: {loss_t} - MSE_v: {loss_v}')

optimizer.zero_grad()torch_model = TorchLinearRegression(1)scratch model¶

model = SimpleLinearRegression()evals¶

We will use a metric to compare our model with the PyTorch model.

import MAPE modified¶

# This cell imports torch_mape

# if you are running this notebook locally

# or from Google Colab.

import os

import sys

module_path = os.path.abspath(os.path.join('..'))

if module_path not in sys.path:

sys.path.append(module_path)

try:

from tools.torch_metrics import torch_mape as mape

print('mape imported locally.')

except ModuleNotFoundError:

import subprocess

repo_url = 'https://raw.githubusercontent.com/PilotLeoYan/inside-deep-learning/main/content/tools/torch_metrics.py'

local_file = 'torch_metrics.py'

subprocess.run(['wget', repo_url, '-O', local_file], check=True)

try:

from torch_metrics import torch_mape as mape # type: ignore

print('mape imported from GitHub.')

except Exception as e:

print(e)mape imported locally.

predictions¶

Let’s compare the predictions of our model and PyTorch’s using modified MAPE.

mape(

model.predict(X_valid),

torch_model.forward(X_valid.unsqueeze(-1)).squeeze(-1)

)9.959147478579956They differ considerably because each model has its own parameters initialized randomly and independently of the other model.

copy parameters¶

We copy the values of the PyTorch model parameters to our model.

model.copy_params(torch_model.layer)predictions after copy parameters¶

We measure the difference between the predictions of both models again.

mape(

model.predict(X_valid),

torch_model.forward(X_valid.unsqueeze(-1)).squeeze(-1)

)0.0We can see that their predictions do not differ greatly.

loss¶

mape(

model.evaluate(X_valid, Y_valid),

torch_model.evaluate(X_valid.unsqueeze(-1), Y_valid.unsqueeze(-1))

)0.0training¶

We are going to train both models using the same hyperparameters’ value. If our model is well designed, then starting from the same parameters it should arrive at the same parameters’ values as the PyTorch model after training.

LR: float = 0.01 # learning rate

EPOCHS: int = 16 # number of epochs

BATCH: int = len(X_train) // 3 # number of minibatchtorch_model.fit(

X_train.unsqueeze(-1),

Y_train.unsqueeze(-1),

EPOCHS, LR, BATCH,

X_valid.unsqueeze(-1),

Y_valid.unsqueeze(-1)

)epoch: 0 - MSE: 3858.4284 - MSE_v: 3678.3555

epoch: 1 - MSE: 3279.5901 - MSE_v: 3158.1161

epoch: 2 - MSE: 2791.5492 - MSE_v: 2715.3176

epoch: 3 - MSE: 2379.5309 - MSE_v: 2337.8917

epoch: 4 - MSE: 2031.2332 - MSE_v: 2015.7238

epoch: 5 - MSE: 1736.4046 - MSE_v: 1740.3273

epoch: 6 - MSE: 1486.4949 - MSE_v: 1504.5734

epoch: 7 - MSE: 1274.3664 - MSE_v: 1302.4666

epoch: 8 - MSE: 1094.0545 - MSE_v: 1128.9585

epoch: 9 - MSE: 940.5701 - MSE_v: 979.7926

epoch: 10 - MSE: 809.736 - MSE_v: 851.3757

epoch: 11 - MSE: 698.0505 - MSE_v: 740.6702

epoch: 12 - MSE: 602.575 - MSE_v: 645.105

epoch: 13 - MSE: 520.8409 - MSE_v: 562.5008

epoch: 14 - MSE: 450.7716 - MSE_v: 491.0079

epoch: 15 - MSE: 390.6182 - MSE_v: 429.0538

model.fit(

X_train, Y_train,

EPOCHS, LR, BATCH,

X_valid, Y_valid

)epoch: 0 - MSE: 3858.4284 - MSE_v: 3678.3555

epoch: 1 - MSE: 3279.5901 - MSE_v: 3158.1161

epoch: 2 - MSE: 2791.5492 - MSE_v: 2715.3176

epoch: 3 - MSE: 2379.5309 - MSE_v: 2337.8917

epoch: 4 - MSE: 2031.2332 - MSE_v: 2015.7238

epoch: 5 - MSE: 1736.4046 - MSE_v: 1740.3273

epoch: 6 - MSE: 1486.4949 - MSE_v: 1504.5734

epoch: 7 - MSE: 1274.3664 - MSE_v: 1302.4666

epoch: 8 - MSE: 1094.0545 - MSE_v: 1128.9585

epoch: 9 - MSE: 940.5701 - MSE_v: 979.7926

epoch: 10 - MSE: 809.736 - MSE_v: 851.3757

epoch: 11 - MSE: 698.0505 - MSE_v: 740.6702

epoch: 12 - MSE: 602.575 - MSE_v: 645.105

epoch: 13 - MSE: 520.8409 - MSE_v: 562.5008

epoch: 14 - MSE: 450.7716 - MSE_v: 491.0079

epoch: 15 - MSE: 390.6182 - MSE_v: 429.0538

predictions after training¶

mape(

model.predict(X_valid),

torch_model.forward(X_valid.unsqueeze(-1)).squeeze(-1)

)0.0bias¶

We directly measure the difference between the bias values of both models.

mape(

model.b.clone(),

torch_model.layer.bias.detach()

)0.0weight¶

And measure the difference between the weight values of both models.

mape(

model.w.clone(),

torch_model.layer.weight.detach().squeeze(0)

)0.0All right, our scrath simple linear regression is well done respect to PyTorch’s implementation.